The concept of blade computing has been around for quite some time and continues to find popularity among service providers and in big data centers.

Supermicro has a number of SuperBlade® offerings for a variety of deployment scenarios. Their most recent offerings include the latest Intel Broadwell CPUs and a wide range of both networking and storage options.

For this review Supermicro provided four of their StorageBlade SBI-7128R-C6N blades and a 10-Blade enclosure SBE-710Q-R90.

Each blade has two Intel Xeon E5-2697 v4 processors, 256 GB of memory and six hot-swappable 2.5″ disk drive bays. Three of the six drive bays accommodate NVMe disks, providing a direct PCIe path for the highest performance.

We were provided with two 2 TB Intel NVMe disks plus another Intel 400 GB NVMe drive to serve as the cache tier for an all-NVMe disk group. The other three slots were populated with two 1.6 TB SSDs plus a single 400 GB SSD for caching.
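As a rough check on what that drive mix adds up to, the sketch below totals raw capacity per blade and across the four review blades. It assumes every blade was populated identically, with one all-NVMe disk group and one SSD disk group, and that the cache drives do not count toward capacity (which is how VSAN treats its cache tier). The class and function names are purely illustrative, and the figures are raw decimal capacity before any replication or file system overhead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DiskGroup:
    """One disk group: a single cache drive plus its capacity drives (sizes in GB)."""
    name: str
    cache_gb: int
    capacity_drives_gb: tuple

    @property
    def capacity_gb(self) -> int:
        # Only the capacity drives count; the cache drive is excluded.
        return sum(self.capacity_drives_gb)


# Drive layout per blade as described in the review (assumed identical on every blade).
BLADE_DISK_GROUPS = (
    DiskGroup("nvme", cache_gb=400, capacity_drives_gb=(2000, 2000)),
    DiskGroup("ssd", cache_gb=400, capacity_drives_gb=(1600, 1600)),
)
BLADES_IN_REVIEW = 4


def raw_capacity_gb(groups, blades=1):
    """Raw capacity-tier total in GB for the given number of blades."""
    return blades * sum(group.capacity_gb for group in groups)


if __name__ == "__main__":
    per_blade = raw_capacity_gb(BLADE_DISK_GROUPS)
    cluster = raw_capacity_gb(BLADE_DISK_GROUPS, BLADES_IN_REVIEW)
    print(f"Per blade:   {per_blade / 1000:.1f} TB raw")
    print(f"Four blades: {cluster / 1000:.1f} TB raw")
```

Usable capacity will be lower once a storage policy (mirroring or erasure coding) is applied, so treat these totals as an upper bound.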

Hardware Details

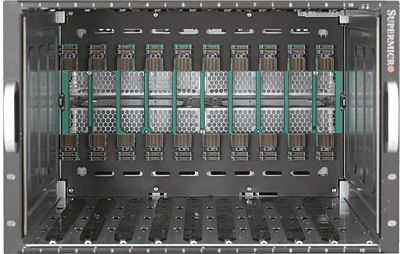

The Supermicro SuperBlade® chassis accommodates up to ten blades in the 7U enclosure. Figure 1 shows the interior of the chassis with the multi-pin connectors at the rear.
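To put that density in numbers, here is a small sketch that totals cores and memory for a fully populated chassis of blades configured like ours. The core count of 18 per Xeon E5-2697 v4 comes from Intel's published specification rather than from anything we measured, and the 42U rack height is an assumption for illustration only.

```python
# Figures from the review configuration.
BLADES_PER_CHASSIS = 10
CHASSIS_HEIGHT_U = 7
SOCKETS_PER_BLADE = 2
MEMORY_GB_PER_BLADE = 256

# Assumption: 18 cores per Xeon E5-2697 v4, per Intel's published spec.
CORES_PER_SOCKET = 18


def chassis_totals(blades=BLADES_PER_CHASSIS):
    """Aggregate cores, memory and blade density for one chassis."""
    return {
        "cores": blades * SOCKETS_PER_BLADE * CORES_PER_SOCKET,
        "memory_gb": blades * MEMORY_GB_PER_BLADE,
        "blades_per_u": blades / CHASSIS_HEIGHT_U,
    }


def chassis_per_rack(rack_u=42):
    """How many whole 7U chassis fit in a rack (42U assumed by default)."""
    return rack_u // CHASSIS_HEIGHT_U


if __name__ == "__main__":
    totals = chassis_totals()
    print(f"Cores per chassis:  {totals['cores']}")
    print(f"Memory per chassis: {totals['memory_gb']} GB")
    print(f"Blades per U:       {totals['blades_per_u']:.2f}")
    print(f"Chassis per rack:   {chassis_per_rack()}")
```

A real rack will usually give up some space to top-of-rack switches, PDUs and the UPS, so the chassis-per-rack figure is a ceiling rather than a deployment plan.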

Figure 2 shows a single StorageBlade with the cover removed and the arrangement of memory and CPUs. At the right rear corner of the blade you can see two connectors which, in our case, provide networking expansion.

Networking modules install on the rear of the chassis with room for two 10 GbE and two 1 GbE switches for redundancy. Our review unit came with two 10 GbE switches plus a single 1 GbE switch.

The rear of the SuperBlade® chassis has slots for four removable 208-volt power supplies to provide redundant power. Schneider Electric (formerly APC power) provided a Smart-UPS 5000VA unit for us to test in conjunction with this review.

This particular model has both a 30-amp and a 20-amp connector and delivers 5 kVA of power. We connected the four power supplies to a single power distribution unit (PDU) and then plugged the PDU into the UPS.
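For a sense of how those ratings relate, the sketch below converts between volt-amperes and amps at 208 volts. It assumes a single-phase 208-volt circuit, and the optional 80 percent continuous-load derating is a common electrical rule of thumb that we are assuming here, not something taken from the UPS documentation. The function names are illustrative.

```python
NOMINAL_VOLTS = 208  # supply voltage for the chassis power supplies, per the review


def amps_for_load(load_va, volts=NOMINAL_VOLTS):
    """Current drawn by a load of the given apparent power (single-phase assumed)."""
    return load_va / volts


def connector_limit_va(connector_amps, volts=NOMINAL_VOLTS, derate=1.0):
    """Apparent power a connector can carry, optionally derated for continuous load."""
    return connector_amps * volts * derate


if __name__ == "__main__":
    print(f"5 kVA at 208 V draws about {amps_for_load(5000):.1f} A")
    for amps in (30, 20):
        full = connector_limit_va(amps)
        derated = connector_limit_va(amps, derate=0.8)  # assumed 80% rule
        print(f"{amps} A connector: {full:.0f} VA peak, {derated:.0f} VA derated")
```

Under these assumptions, the full 5 kVA rating only fits through the 30-amp connector, which is one reason to run a fully loaded chassis from that outlet rather than the 20-amp one.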

One of the nice features of this UPS is the ability to remotely control the outlets using a web browser and see the power consumption on each switch group.

The front panel of each blade includes a special connector that makes it possible to connect a VGA monitor, two USB devices and a serial port. Using this connector, we attached an external Avocent KVM to give us easy access to each blade.

The second USB port was used for software installation. It’s also possible to install an OS remotely using the remote KVM and remote media feature.

OS and Management

The primary software focus for testing on this system was VMware’s VSAN 6.2, although we also loaded the latest Technical Preview (TP5) of Microsoft’s Windows Server 2016 and the Nutanix Community Edition.

For the Windows Server 2016 TP5 install we had to remove the USB SATA DOM devices (which were used to boot ESXi) and install the OS to the smallest SSD drive.

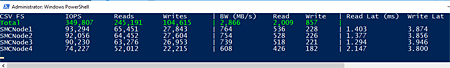

With this done, we were able to configure all four nodes to test the latest Storage Spaces Direct feature, which worked extremely well. Figure 3 shows a snapshot of IOPS taken from a PowerShell session while running the Microsoft DiskSpd tool.

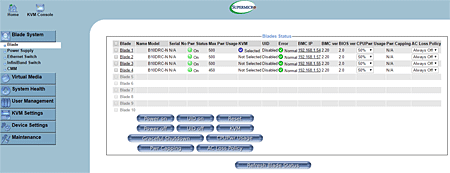

Each StorageBlade has its own baseboard management controller (BMC), as does the chassis. The chassis management module (CMM) (see Figure 4) provides a status page showing all installed blades and pertinent settings for each.

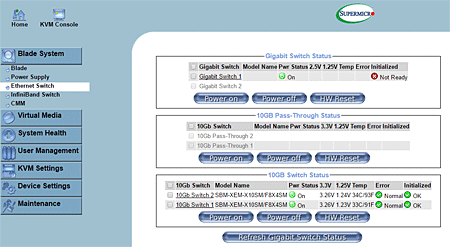

Clicking on the BMC IP address from the main status page will connect you to the BMC page for that node. The networking components also have their own status and control page as shown in Figure 5.

We’re doing a full all-flash VSAN 6.2 review later, but suffice it to say at this point the Supermicro StorageBlade server with NVMe and SSD is a screamer.

With two disk groups on each node the performance testing was the fastest we’ve seen yet on a VSAN cluster.

Each blade has two internal SATA ports that accept a SATADOM (Disk on Module) for booting ESXi. Supermicro provided two 32 GB devices for each StorageBlade, more than enough for loading ESXi.

With ESXi booted, each node was configured with two 10 GbE ports and a single 1 GbE port. The two 10 GbE ports were used for VSAN and VM traffic, while the 1 GbE port was dedicated to management traffic.

This provides a full 10 GbE of bandwidth dedicated specifically to VSAN traffic and makes this box really fly.

We used essentially the same networking setup for the Windows Server 2016 configuration with 10 GbE for storage traffic and 1 GbE for management.

Finally, we tested the Nutanix Community Edition of their Acropolis hypervisor environment, which is based on KVM. It is possible to build a cluster by loading a minimum of three nodes with the base image and then adding each new node.

For the purpose of this review we loaded the software on a single node to test the management interface. Installation went without a hitch, and we were up and running in under 20 minutes.

Bottom Line

If it’s not obvious by now, you should know we found this system to be extremely powerful. With up to ten nodes in a single 7U enclosure, it packs two more nodes than the other major blade vendors fit in the same space.

Six small form factor drive bays per blade is on par with the full-height blades from HP and Cisco, but Supermicro can pack ten StorageBlades into its chassis while the competition can fit only four.

On the downside, you won’t get the same level of management software that Cisco and HP provide with their UCS and HPE OneView products.

Pricing for the system is extremely competitive and will vary depending on your CPU, memory and disk choices.

Paul Ferrill, based in Chelsea, Alabama, has been writing about computers and software for almost 20 years. He has programmed in more languages than he cares to count, but now leans toward Visual Basic and C#.
