Scalability is a system’s ability to quickly grow or shrink its computing, storage, or networking capacity. As application requirements and resource demands evolve, scaling storage infrastructure lets you adapt to those demands, control costs, and improve the operations team’s efficiency.

Scaling up (vertical scaling) and scaling out (horizontal scaling) are key methods organizations use to add capacity to their infrastructure. To an end user, these two concepts may seem to perform the same function. However, they each handle specific needs and solve specific capacity issues for the system’s infrastructure in different ways.

What’s the difference between scaling up and scaling out?

Simply put, scaling up adds resources, such as hard drives and memory, to an existing physical server to increase its capacity, whereas scaling out adds more servers to your architecture to spread the workload across more machines.

Scaling up

Scaling up storage infrastructure adds resources to the systems that support an application so that performance improves or stays adequate. Both virtual and hardware resources can be scaled up.

In the context of hardware, it may be as straightforward as installing a larger hard drive to increase storage capacity. Scaling up also does not necessarily require changes to your system architecture.

Scaling up is viable only until individual components can no longer be upgraded, which makes it a rather short-term solution.

When to scale up infrastructure

- When there’s a performance impact: A good indicator of when to scale up is when your workloads start reaching performance limits, resulting in increased latency and performance bottlenecks caused by I/O and CPU capacity.

- When storage optimization doesn’t work: Whenever the effectiveness of optimization solutions for performance and capacity diminishes, it may be time to scale up.

- When your application struggles to handle the complexities of a distributed system: Consider scaling up when your application cannot easily distribute its processes across multiple servers or cope with the coordination that a distributed system requires.
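The first indicator above can be turned into a simple check. The following is a minimal Python sketch, not a monitoring tool: the `HostMetrics` fields, the function name, and all three thresholds are assumptions chosen for illustration and should be tuned to your own workloads.

```python
from dataclasses import dataclass


@dataclass
class HostMetrics:
    """Hypothetical snapshot of one server's load (all fields are assumptions)."""
    cpu_utilization: float   # fraction of CPU in use, 0.0 to 1.0
    io_wait: float           # fraction of time spent waiting on I/O, 0.0 to 1.0
    p95_latency_ms: float    # 95th-percentile request latency in milliseconds


def should_consider_scale_up(
    m: HostMetrics,
    cpu_limit: float = 0.85,          # illustrative threshold
    io_wait_limit: float = 0.20,      # illustrative threshold
    latency_limit_ms: float = 250.0,  # illustrative threshold
) -> list[str]:
    """Return the reasons (possibly none) that suggest a scale-up review."""
    reasons = []
    if m.cpu_utilization >= cpu_limit:
        reasons.append("cpu")
    if m.io_wait >= io_wait_limit:
        reasons.append("io")
    if m.p95_latency_ms >= latency_limit_ms:
        reasons.append("latency")
    return reasons


busy = HostMetrics(cpu_utilization=0.92, io_wait=0.05, p95_latency_ms=310.0)
print(should_consider_scale_up(busy))  # ['cpu', 'latency']
```

An empty list means none of the limits were reached. In practice you would evaluate sustained readings over time rather than a single snapshot.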

Example situations of when to scale up

An ERP system that manages various business processes

Consider a scenario where a large manufacturing company uses an enterprise resource planning (ERP) system to manage a variety of business processes. The ERP system should be capable of handling high I/O operations due to the large volumes of data being processed every day, including inventory, orders, payroll and more.

As the company grows and the amount of data increases, system performance may degrade, leading to inefficient operations. To counter these performance issues, the company can choose to scale up by adding more RAM, CPU, and storage resources to its existing server. This would increase the server’s capacity to handle the additional data and operations, resulting in improved system performance.

Machine learning (ML) workloads

Consider a tech startup specializing in ML. The startup develops complex models for data analysis that require high computational power and memory for processing large datasets. As the company acquires more data and the complexity of models increases, the current hardware configuration becomes a limiting factor.

To mitigate this, the company can scale up its infrastructure by adding more powerful CPUs or GPUs and increasing memory and storage. The beefed-up infrastructure can support the demanding ML workloads, ensuring smooth operations and high-performance model training.

Strengths

- Relative speed: Replacing a resource such as a single processor with a dual processor can as much as double CPU throughput for workloads that are able to use both processors. Similarly, adding or upgrading dynamic random access memory (DRAM) can improve memory performance.

- Simplicity: Increasing the size of an existing system means that network connectivity and software configuration do not change. The time and effort this saves makes the scaling process much more straightforward than scaling out.

- Cost-effectiveness: A scale-up approach is often cheaper than scaling out, as networking hardware and licensing cost much less. Additionally, operational costs such as cooling are lower with scale-up architectures.

- Limited energy consumption: Because less physical equipment is needed than when scaling out, overall energy consumption is lower.
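How much a second processor actually helps depends on how much of the workload can run in parallel. A common way to estimate this is Amdahl's law, which this article does not invoke but which is a standard rule of thumb. The sketch below is illustrative only: the function name and the 80 percent parallel fraction are assumptions.

```python
def amdahl_speedup(parallel_fraction: float, processors: int) -> float:
    """Estimate speedup from Amdahl's law: 1 / ((1 - p) + p / n)."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if processors < 1:
        raise ValueError("processors must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / processors)


# A workload that is 80% parallelizable (assumed figure) on two processors:
print(round(amdahl_speedup(0.8, 2), 2))  # 1.67

# Only a fully parallel workload reaches the ideal 2x:
print(amdahl_speedup(1.0, 2))  # 2.0
```

The takeaway is that doubling a resource sets an upper bound on the gain. The serial portion of the workload determines how close you get to it.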

Weaknesses

- Latency: A higher-capacity machine does not guarantee that a workload runs faster. For a use case such as video processing, a scale-up architecture may still introduce latency, which in turn may lead to worse performance.

- Labor and risks: Upgrading the system can be cumbersome, as you may, for instance, have to copy data to a new server. Switchover to a new server may result in downtime and poses a risk of data loss during the process.

- Aging hardware: The constraints of aging equipment lead to diminishing effectiveness and efficiency with time. Backup and recovery times are examples of functionality that is negatively impacted by diminishing performance and capacity.

Scaling out

Scale-out infrastructure adds hardware, typically more servers or nodes, to scale functionality, performance, and capacity. Scaling out addresses some of the limitations of scale-up infrastructure, as it is generally more efficient and effective. Furthermore, scaling out using the cloud means you do not have to buy new hardware whenever you want to upgrade your system.

While scaling out allows you to replicate resources or services, one of its key differentiators is fluid resource scaling. This allows you to respond to varying demand quickly and effectively.
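To make "fluid resource scaling" concrete, here is a minimal Python sketch of a proportional sizing rule: it resizes a pool of servers so that the load per server returns to a target. The function name, the requests-per-second metric, and the minimum and maximum bounds are all assumptions for illustration, not a specific product's behavior.

```python
import math


def desired_nodes(
    current_nodes: int,
    observed_rps_per_node: float,
    target_rps_per_node: float,
    min_nodes: int = 1,    # assumed lower bound
    max_nodes: int = 20,   # assumed upper bound
) -> int:
    """Size the pool so that per-node load moves back toward the target."""
    if current_nodes < 1 or target_rps_per_node <= 0:
        raise ValueError("need at least one node and a positive target")
    wanted = math.ceil(current_nodes * observed_rps_per_node / target_rps_per_node)
    # Clamp to the allowed pool size.
    return max(min_nodes, min(max_nodes, wanted))


# Demand rises: 4 nodes at 900 req/s each, target 600 req/s each.
print(desired_nodes(4, 900.0, 600.0))  # 6

# Demand falls: the same rule removes nodes.
print(desired_nodes(4, 300.0, 600.0))  # 2
```

The same function handles both directions, which is the point of the paragraph above: capacity follows demand up and down instead of being fixed at purchase time.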

When to scale out infrastructure

- When you need a long-term scaling strategy: The incremental nature of scaling out allows you to scale your infrastructure for expected long-term data growth. Components can be added or removed depending on your goals.

- When upgrades need to be flexible: Scaling out avoids the limitations of depreciating technology, as well as vendor lock-in for specific hardware technologies.

- When storage workloads need to be distributed: Scaling out is perfect for use cases that require workloads to be distributed across several storage nodes.

Example situations of when to scale out

Streaming services that must provide a smooth user experience

A company like Netflix or YouTube, which provides streaming services to millions of users worldwide, faces unique challenges. With a growing global user base, it’s not practical to rely on a single server or a cluster in one location.

In such a scenario, the company would scale out, adding servers in various global regions. This strategy would improve content delivery, reduce latency, and provide a consistent, smooth user experience. This is often executed in conjunction with content delivery networks (CDNs) that help distribute the content across various regions.

Social networking platforms

A rapidly growing social networking platform that must manage an influx of user-generated content has to store and retrieve vast amounts of data, including user profiles, posts, and multimedia content. Scaling up might provide temporary relief. However, as the platform grows and attracts more users, scaling out becomes a necessity.

Adding more servers to the network allows the platform to distribute data storage and retrieval operations across them. This helps the platform handle the high-volume, high-velocity data typical of such services efficiently. Scaling out can also improve availability and redundancy, which improves the overall user experience.
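One simple way to spread storage and retrieval across servers is to hash each record's key and use the result to pick a server. The following Python sketch shows the idea under stated assumptions: the server names and the `user:<id>` key format are hypothetical, and real platforms typically use more elaborate schemes.

```python
import hashlib
from collections import Counter


def pick_server(key: str, servers: list[str]) -> str:
    """Map a key to one server using a stable hash (simple modulo sharding)."""
    if not servers:
        raise ValueError("at least one server is required")
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(servers)
    return servers[index]


servers = ["node-a", "node-b", "node-c"]  # hypothetical server names

# Count how many of 9,000 hypothetical user keys land on each server.
placement = Counter(pick_server(f"user:{i}", servers) for i in range(9000))
print(placement)  # each node should hold roughly a third of the keys
```

Because the hash is stable, the same key always maps to the same server, so reads find what writes stored. One known drawback of plain modulo sharding is that changing the number of servers remaps most keys. Techniques such as consistent hashing reduce that movement.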

Strengths

- Embraces newer server technologies: Because the architecture is not constrained by older hardware, scale-out infrastructure is not affected by capacity and performance issues as much as scale-up infrastructure.

- Adaptability to demand changes: Scale-out architecture makes it easier to adapt to changes in demand, since services and hardware can be added or removed as needed.

- Cost management: Scaling out follows an incremental model, which makes costs more predictable. Furthermore, such a model allows you to pay for the resources required as you need them.

Weaknesses

- Limited rack space: Scale-out infrastructure poses the risk of running out of rack space. At some point the available rack space may no longer support increasing demand, so scaling out is not always the right way to handle growth.

- Increased operational costs: Adding more servers brings additional costs, such as licensing, cooling, and power.

- Higher upfront costs: Setting up a scale-out system requires a sizable investment, as you’re not just upgrading existing infrastructure.

Scale up or scale out? How to decide

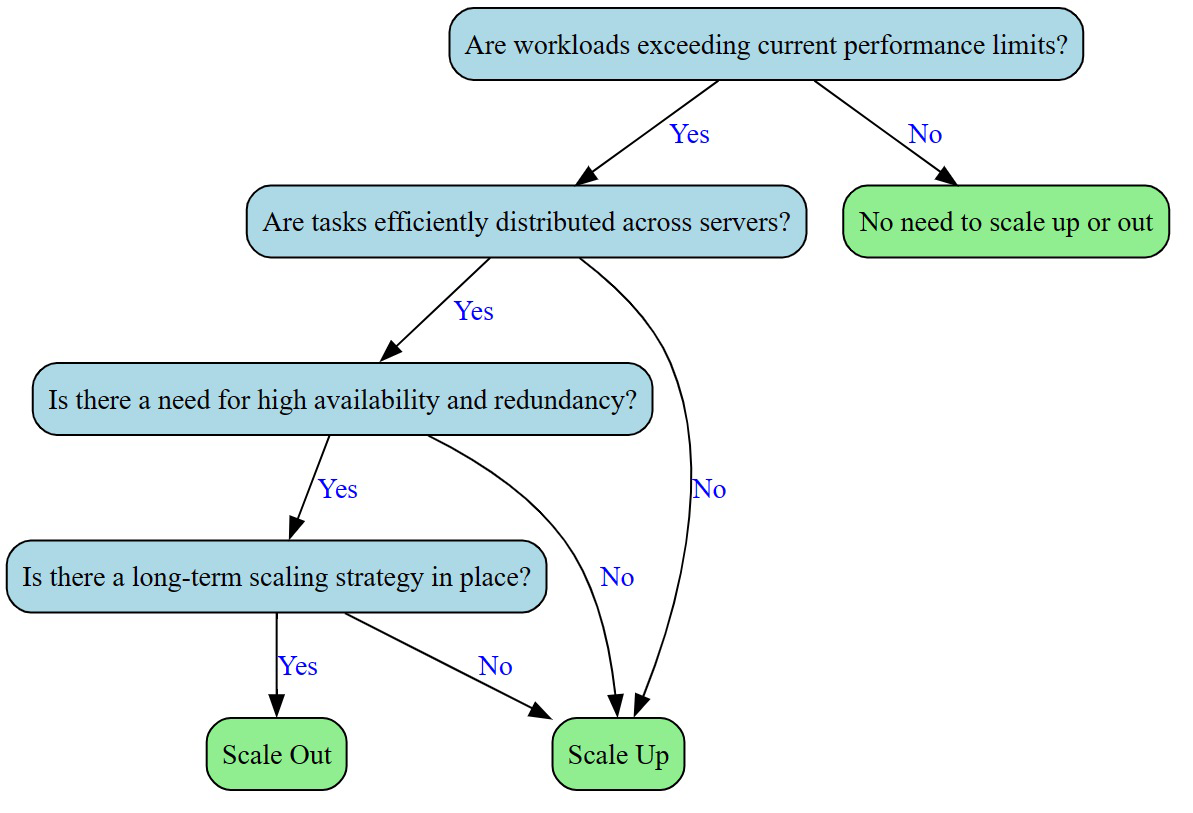

So, should you scale up or scale out your infrastructure? The decision tree below will help you more clearly answer this question.

Bottom line: Scale up and scale out

Deciding between scaling up and scaling out largely depends on your organization’s specific needs and circumstances. Vertical scaling is ideal for situations where a single system can meet the demand, as with high-performance databases. However, this approach is limited by hardware capabilities and could lead to higher costs over time.

Conversely, horizontal scaling works best when the workload can be distributed efficiently across multiple servers. This is often preferred for handling web traffic surges or managing user-generated data on platforms like social media sites. Yet, this method can introduce complexities related to managing the distributed system.

In practice, many organizations use a hybrid approach, maximizing each server’s power through scaling up, then expanding capacity through scaling out. Ultimately, the choice between the two strategies should take into account your application’s requirements, growth projections and budget. Remember, the goal is to align your scaling strategy with your business objectives for optimal performance.

One option for scaling your storage is network-attached storage (NAS).
