Both VMware and Microsoft have been in the server virtualization market for a number of years: VMware for more than a decade, Microsoft only relatively recently.

IT professionals and organizations need to understand the differences between the Microsoft Hyper-V and VMware vSphere architectures, and the advantages and disadvantages of each, before proposing a virtualization solution to customers or employees or deploying one in a production environment.

There are a number of important components to consider when choosing between VMware vSphere and Microsoft Hyper-V, but from an architectural standpoint, the following components play an important role in choosing the right server virtualization product:

In general, virtualization vendors refer to three types of virtualization architecture:

Explaining all three types of virtualization architecture is beyond the scope of this article, so we will focus primarily on the Type 1 VMM. Both Microsoft Hyper-V and VMware vSphere use a Type 1 VMM to implement their server virtualization technologies.

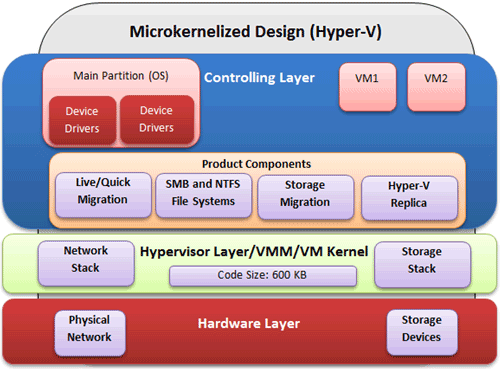

Type 1 VMM can be further divided into two subcategories: the Monolithic Hypervisor Design and the Microkernelized Hypervisor Design. Both designs have three layers in which the different components of the virtualization product operate.

The lowest layer is the “Hardware Layer,” which is virtualized by the “Hypervisor Layer” running directly on top of it. The top layer is the “Controlling Layer.” Its overall objective is to control the components running in it and to provide the components that virtual machines need to communicate with the “Hypervisor Layer.”

Note: The “Hypervisor Layer” is sometimes referred to as the “VMM Layer” or the “VM Kernel Layer.”
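To make the layer ordering concrete, here is a toy Python model of the three-layer Type 1 VMM stack described above. It is purely illustrative: the `Layer` enum and the `path_to_hardware` helper are hypothetical names introduced for this sketch and do not correspond to any Hyper-V or vSphere API. The model only captures the stacking order: the Controlling Layer sits on top, the Hypervisor Layer sits directly on the Hardware Layer, and anything reaching the hardware from above passes through the Hypervisor Layer.

```python
from enum import IntEnum


class Layer(IntEnum):
    """Layers of a Type 1 VMM, numbered from the bottom (0) to the top (2).

    Hypothetical model for illustration only.
    """
    HARDWARE = 0
    HYPERVISOR = 1   # also called the "VMM Layer" or "VM Kernel Layer"
    CONTROLLING = 2


def path_to_hardware(start: Layer) -> list:
    """Return the layers crossed, top-down, going from `start` to the hardware."""
    return [Layer(level) for level in range(start, Layer.HARDWARE - 1, -1)]


# A request originating in the Controlling Layer crosses every layer below it.
print([layer.name for layer in path_to_hardware(Layer.CONTROLLING)])
# ['CONTROLLING', 'HYPERVISOR', 'HARDWARE']
```

Using an `IntEnum` keeps the bottom-to-top ordering explicit, so "directly on top of" simply means "one level higher."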

Microsoft Hyper-V uses the Microkernelized Hypervisor Design. In this design, device drivers do not need to be part of the Hypervisor Layer. Instead, they operate independently and run in the Controlling Layer, as shown in the image below.
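The key architectural difference between the two Type 1 subcategories is where the device drivers live. The toy Python sketch below records that placement. In the Microkernelized design, drivers run in the Controlling Layer, as stated above. In the Monolithic design, by contrast, drivers are built into the Hypervisor Layer. The `HypervisorDesign` class and the `drivers_in_hypervisor` helper are hypothetical names for illustration only and are not part of any vendor API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HypervisorDesign:
    """Hypothetical record of a Type 1 hypervisor design (illustration only)."""
    name: str
    driver_layer: str  # the layer in which device drivers run


# Monolithic: device drivers are part of the Hypervisor Layer itself.
MONOLITHIC = HypervisorDesign("Monolithic", driver_layer="Hypervisor Layer")
# Microkernelized (used by Hyper-V): drivers run outside the hypervisor.
MICROKERNELIZED = HypervisorDesign("Microkernelized", driver_layer="Controlling Layer")


def drivers_in_hypervisor(design: HypervisorDesign) -> bool:
    """True if the design places device drivers inside the Hypervisor Layer."""
    return design.driver_layer == "Hypervisor Layer"


for design in (MONOLITHIC, MICROKERNELIZED):
    print(f"{design.name}: drivers run in the {design.driver_layer}")
# Monolithic: drivers run in the Hypervisor Layer
# Microkernelized: drivers run in the Controlling Layer
```

Keeping drivers out of the Hypervisor Layer keeps the hypervisor itself smaller, which is the starting point for the advantages of the Microkernelized design.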

The Microkernelized Hypervisor Design provides the following advantages:

Property of TechnologyAdvice. © 2025 TechnologyAdvice. All Rights Reserved
